|

Statistical Entropy - Mass, Energy, and Freedom: The energy or the mass of a part of the universe may increase or decrease, but only if there is a corresponding decrease or increase somewhere else in the universe. The freedom in that part of the universe may increase with no change in the freedom of the rest of the universe. There might be decreases in freedom in the rest of the universe, but the sum of the increase and decrease must result in a net increase.

Statistical Entropy: Entropy is a state function that is often erroneously referred to as the 'state of disorder' of a system. Qualitatively, entropy is simply a measure of how much the energy of atoms and molecules becomes more spread out in a process. It can be defined in terms of the statistical probabilities of a system or in terms of the other thermodynamic quantities.

Simple Entropy Changes - Examples: Several examples are given to demonstrate how the statistical definition of entropy and the second law can be applied: phase change, gas expansions, dilution, colligative properties and osmosis.

Microstates: Dictionaries define “macro” as large and “micro” as very small, but a macrostate and a microstate in thermodynamics aren't just definitions of big and little sizes of chemical systems. Instead, they are two very different ways of looking at a system. A microstate is one of the huge number of different accessible arrangements of the molecules' motional energy for a particular macrostate.

‘Disorder’ in Thermodynamic Entropy: Boltzmann’s sense of “increased randomness” as a criterion of the final equilibrium state for a system compared to initial conditions was not wrong. What was flawed was his surprisingly simplistic conclusion: if the final state is random, the initial system must have been the opposite, i.e., ordered. “Disorder” was the consequence, to Boltzmann, of an initial “order”, not (as is obvious today) of what can only be called a “prior, lesser but still humanly-unimaginable, large number of accessible microstates”.

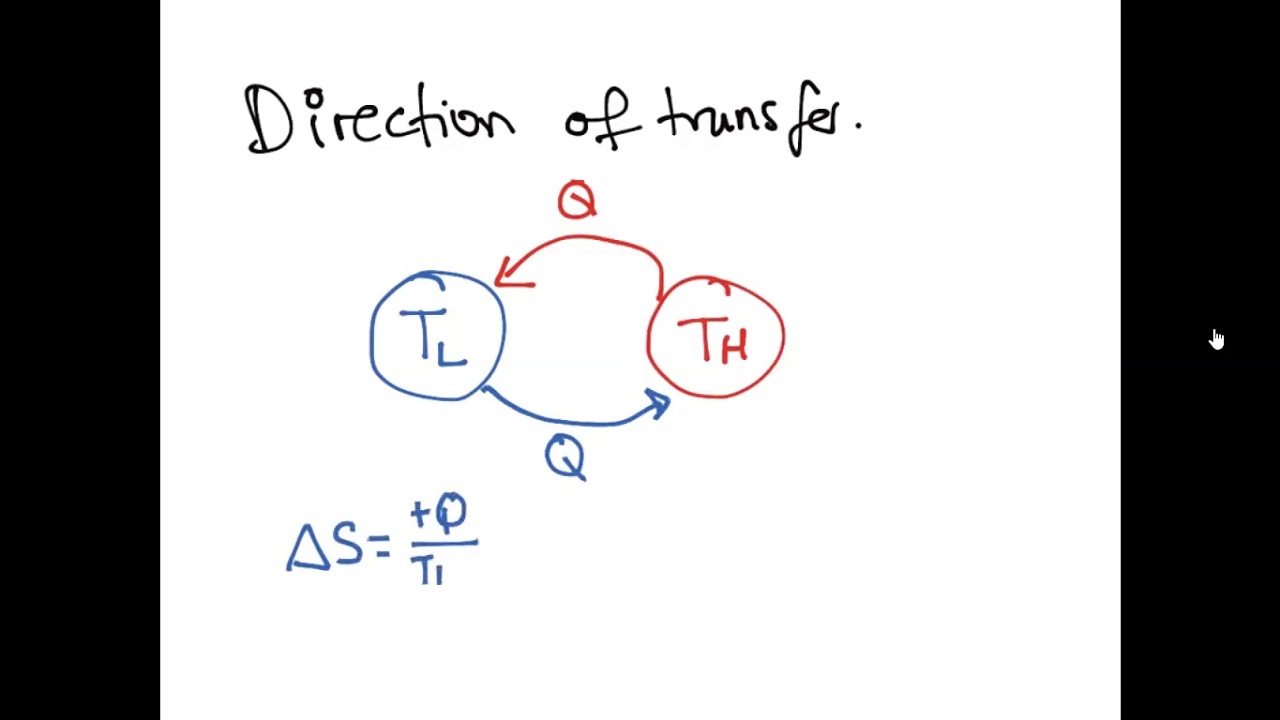

Entropy (S) is a state function that can be related to the number of microstates for a system (the number of ways the system can be arranged) and to the ratio of reversible heat to kelvin temperature. It may be interpreted as a measure of the dispersal or distribution of matter and/or energy in a system. Put another way, entropy can be defined as the randomness or dispersal of energy of a system: it is a measure of how organized or disorganized energy is in a system of atoms or molecules. The more disordered a system is, the higher (the more positive) the value of its entropy. Entropy is simply a quantitative measure of what the second law of thermodynamics describes: the spreading of energy until it is evenly spread.

Entropy contained in a system, say in a mole of a pure substance, is a theoretical quantity that takes account of all heat transferred to it starting from absolute zero, 0 K. By definition, the change in entropy can be evaluated by measuring the amount of energy transferred. This definition of entropy (change) is called Clausius entropy. It says that entropy is generated (or removed) by heating (or cooling) a system. It is a thermodynamic, rather than statistical-mechanical, definition.

The meaning of entropy is different in different fields. Thermodynamic entropy is part of the science of heat energy. Information entropy is a measure of information communicated by systems that are affected by data noise. In a substance, entropy is a measure of how much the atoms are free to spread out, move around, and arrange themselves in random ways. For instance, when a substance changes from a solid to a ... Ideas from the study of entropy are now used in information theory, statistical mechanics, chemistry and other areas of study.
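The "ratio of reversible heat to kelvin temperature" can be made concrete with a small calculation. What follows is only a sketch, assuming the heat is transferred reversibly at a constant temperature so that the change in entropy is simply q_rev / T. The function name and the numbers for melting ice (about 6010 J per mole at 273.15 K) are illustrative assumptions, not values taken from the text above.

```python
def clausius_entropy_change(q_rev_joules: float, temperature_kelvin: float) -> float:
    """Entropy change in J/K for heat q_rev transferred reversibly at constant T."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be a positive kelvin value")
    return q_rev_joules / temperature_kelvin


# Assumed illustrative input: one mole of ice melting at 273.15 K
# absorbs roughly 6010 J of heat.
delta_s_melting = clausius_entropy_change(6010.0, 273.15)

# Cooling removes the same heat, so the sign of the entropy change flips.
delta_s_freezing = clausius_entropy_change(-6010.0, 273.15)

print(f"melting:  {delta_s_melting:.1f} J/K")
print(f"freezing: {delta_s_freezing:.1f} J/K")
```

Heating gives a positive entropy change and cooling a negative one, which matches the statement that entropy is generated or removed by heating or cooling a system.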

Boltzmann's paradigm was an ideal gas of N identical particles, of which N_i are in the i-th microscopic condition (range) of position and momentum. For this case, the probability of each microstate of the system is equal, so it was equivalent to count the number of microstates associated with a macrostate.
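Counting microstates for a small system shows how this works in practice. The sketch below assumes the usual multinomial count W = N! / (N_1! N_2! ...) for the number of ways to place N particles into conditions with occupation numbers N_i, and Boltzmann's formula S = k ln W. Neither expression is written out in the text above, and the function names are hypothetical.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def count_microstates(occupations):
    """Number of microstates W = N! / (N_1! * N_2! * ...) for a macrostate."""
    n_total = sum(occupations)
    w = math.factorial(n_total)
    for n_i in occupations:
        # Each division is exact because the multinomial coefficient is an integer.
        w //= math.factorial(n_i)
    return w


def boltzmann_entropy(occupations):
    """Entropy S = k * ln(W) in J/K, assuming all microstates are equally probable."""
    return K_B * math.log(count_microstates(occupations))


# Four particles, all in one condition versus evenly spread over two.
w_concentrated = count_microstates([4, 0])
w_spread = count_microstates([2, 2])
print(w_concentrated, w_spread)
print(boltzmann_entropy([4, 0]), boltzmann_entropy([2, 2]))
```

The spread-out macrostate has more microstates than the concentrated one, and therefore the higher entropy.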

Interpreted in this way, Boltzmann's formula is the most basic formula for the thermodynamic entropy.

The word entropy came from the study of heat and energy in the period 1850 to 1900. Some very useful mathematical ideas about probability calculations emerged from the study of entropy.

The entropy of an object is a measure of the amount of energy which is unavailable to do work. Entropy is also a measure of the number of possible arrangements the atoms in a system can have. In this sense, entropy is a measure of uncertainty or randomness. The higher the entropy of an object, the more uncertain we are about the states of the atoms making up that object, because there are more states to decide from. A law of physics says that it takes work to make the entropy of an object or system smaller; without work, entropy can never become smaller. You could say that everything slowly goes to disorder (higher entropy).
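The link between entropy and uncertainty can be illustrated with a probability-based formula. This sketch assumes the Gibbs (Shannon-style) expression, the negative sum of p ln p over all states, reported in units of the Boltzmann constant. That formula is not given in the text above and the function name is hypothetical. When all W states are equally probable, it reduces to ln W.

```python
import math


def entropy_in_units_of_k(probabilities):
    """Return -sum(p * ln p) over the states, skipping zero-probability states."""
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    total = sum(p * math.log(p) for p in probabilities if p > 0)
    return 0.0 - total


# One state is certain: there is nothing to be uncertain about.
certain = entropy_in_units_of_k([1.0, 0.0, 0.0, 0.0])

# One state is likely, but the others remain possible.
peaked = entropy_in_units_of_k([0.7, 0.1, 0.1, 0.1])

# Four equally likely states: the most uncertain case for four states.
uniform = entropy_in_units_of_k([0.25, 0.25, 0.25, 0.25])

print(certain, peaked, uniform, math.log(4))
```

The more evenly the probability is spread over the available states, the larger the value, which is the sense in which more states to decide from means more uncertainty.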

|
